LOAD TIME

PROJECT SUMMARY

CHALLENGE

Create a system that can handle high volumes of web traffic and scale to varying loads

PRODUCT

A social platform

DELIVERABLES

We’ve created a container-based system, improved user experience, and migrated the platform to a new system

INTRO

Choosing the right software architecture for a digital product is an art. The architecture should be scalable, reusable, and flexible, and it should be able to handle peak loads. Many web applications, including eCommerce, eBanking, and social networking platforms, face huge influxes of users. Some of these loads are predictable (for example, when you launch a marketing campaign), and some are seasonal (such as holidays).

Another common problem is long interface loading times.

Nowadays, people are used to getting the information they need quickly and expect a web page to load within 2 seconds or less. If it takes longer, they can get annoyed and stop using your product because of the loading time alone.

To avoid poor product performance, consider building a scalable software system that can handle peak loads.

OVERVIEW

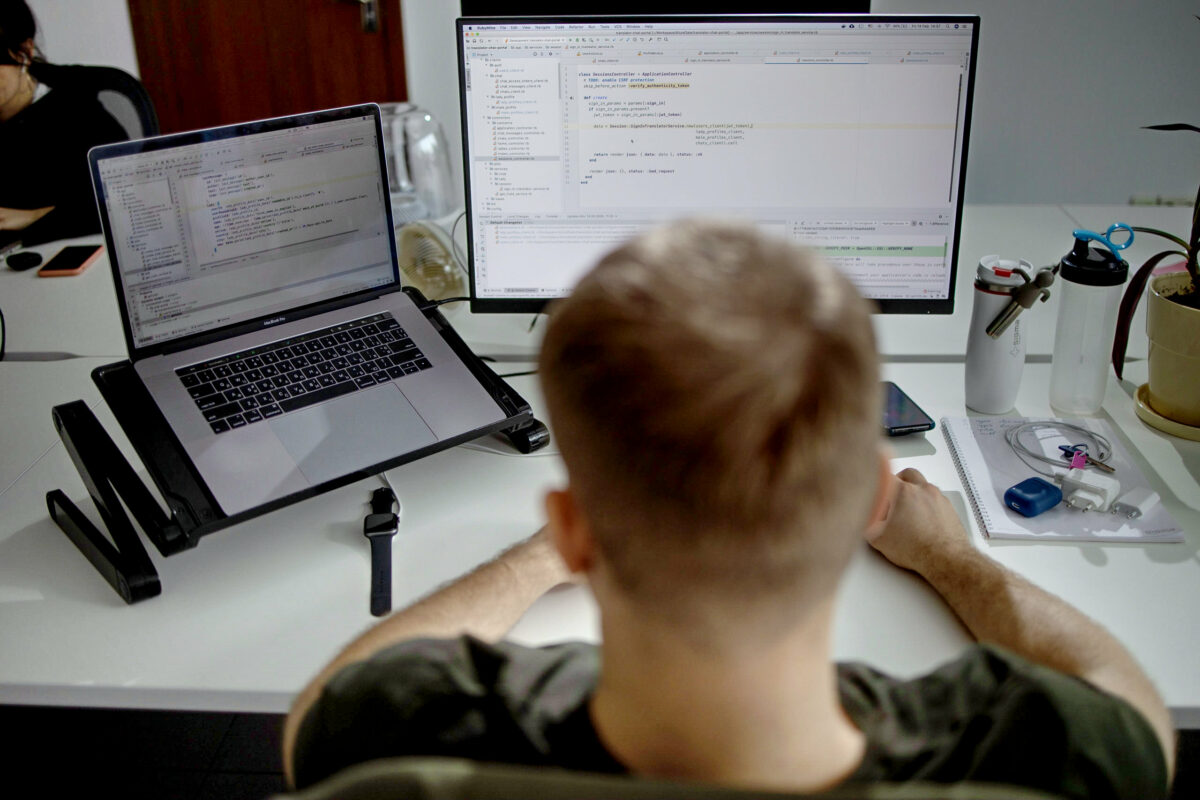

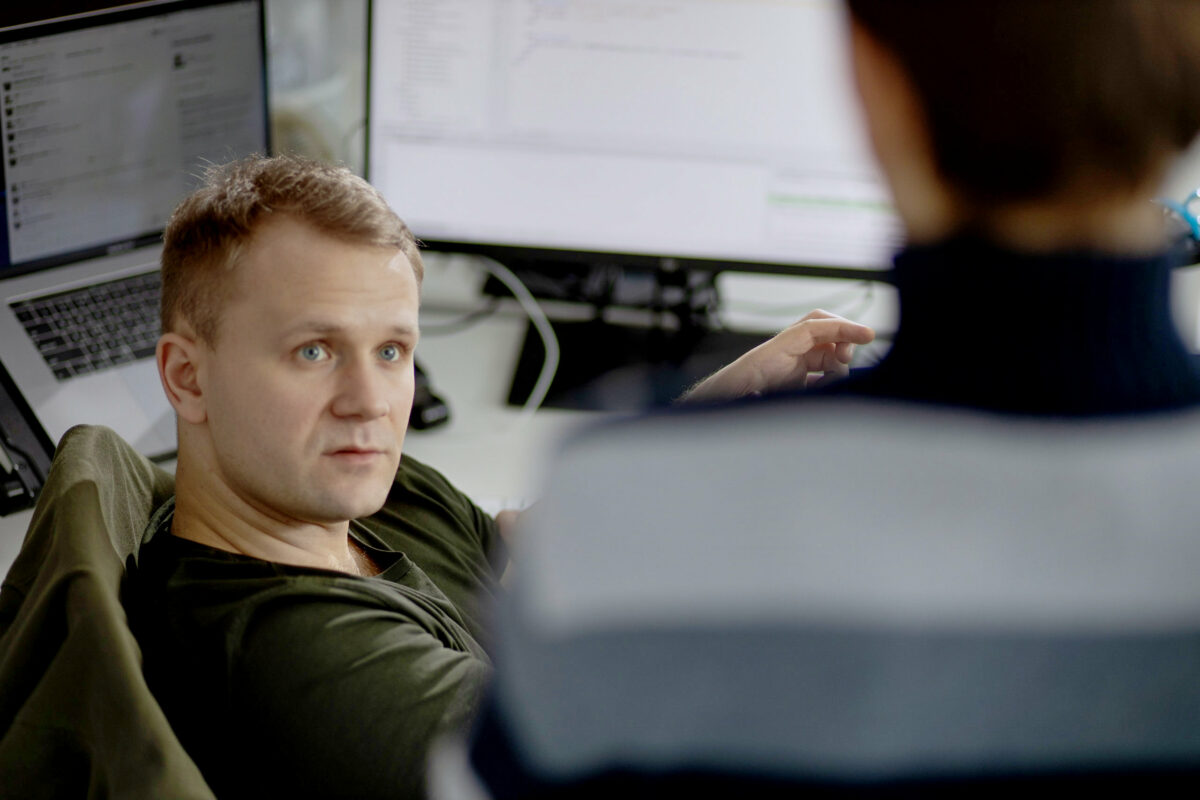

For our client, we developed a social platform. The challenge was to deliver a high-quality, stable product able to handle peak loads.

CHALLENGE

The client’s company outgrew the capabilities of its existing system, which was initially used to jump-start their business. In general, the system worked well, except for a few hours a day when large increases in demand and spikes in traffic were expected.

In addition, during traffic peaks, the interface took about 15 seconds to load, which is unacceptable from a user experience standpoint.

For this reason, the client required an infrastructure that could manage high volumes of web traffic and scale seamlessly to varying loads.

SOLUTION

Our team started with the planning and analysis phase, which focused on:

- Providing a short-term solution at the initial stage.

- Improving the customer experience.

- Deploying Kubernetes and ensuring a smooth migration from the initial solution to the final one.

As a short-term solution, we suggested increasing the number of virtual workstations. Since the project was hosted in the cloud, we rented additional processing time and increased the number of workstations to offload the system during peak loads.
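Because the traffic spikes arrived during predictable hours of the day, this kind of manual scale-out can be expressed as a simple schedule. The sketch below is a minimal illustration, assuming a fixed daily peak window; the window, the instance counts, and the `target_instances` and `in_window` helpers are hypothetical and not taken from the project.

```python
from datetime import datetime, time

# Hypothetical values for illustration only; the case study does not state
# the real peak hours or instance counts.
BASELINE_INSTANCES = 4
PEAK_INSTANCES = 12
PEAK_WINDOWS = [(time(18, 0), time(22, 0))]  # assumed daily evening peak


def in_window(current: time, start: time, end: time) -> bool:
    """True if `current` falls in [start, end), including windows that cross midnight."""
    if start <= end:
        return start <= current < end
    return current >= start or current < end


def target_instances(now: datetime) -> int:
    """Return how many instances to run at `now` under a fixed daily schedule."""
    current = now.time()
    if any(in_window(current, start, end) for start, end in PEAK_WINDOWS):
        return PEAK_INSTANCES
    return BASELINE_INSTANCES


if __name__ == "__main__":
    print(target_instances(datetime(2024, 1, 15, 19, 30)))  # 12
    print(target_instances(datetime(2024, 1, 15, 9, 0)))  # 4
```

A fixed schedule is cheap and easy to reason about, but it cannot react to spikes that fall outside the planned window, which is why metric-based scaling is the more robust long-term option.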

The short-term solution was meant to retain users. At the same time, we were working on a more effective solution that would allow us to withstand peak loads, cut costs, and gather data on system performance.

We realized that the system needed more resources to handle the load. To meet this challenge, we turned to Kubernetes, an open-source container orchestration system that automates the deployment, scaling, and load balancing of containerized applications. Kubernetes allowed us to optimize resource utilization, control and automate application deployments and updates, and lower deployment costs. As traffic increased, we scaled up the number of containers, and we scaled them down when demand dropped.

In addition, the Kubernetes API server exposes the state of objects such as deployments, nodes, and pods, and tools such as kube-state-metrics turn that state into metrics. These metrics help DevOps engineers monitor system performance, keep track of how each component is performing, and check whether it meets its availability and performance targets.
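The case study does not say which autoscaling mechanism was configured. One common option in Kubernetes is the Horizontal Pod Autoscaler (HPA), whose documented core rule sets the desired replica count to ceil(currentReplicas × currentMetricValue / desiredMetricValue). The Python sketch below reproduces only that rule, plus the default 0.1 tolerance and the min/max replica bounds; the function name and the CPU figures are illustrative, and the real HPA also applies stabilization windows and pod readiness checks that are omitted here.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,
    max_replicas: int = 10,
    tolerance: float = 0.1,
) -> int:
    """Simplified form of the Horizontal Pod Autoscaler replica calculation.

    desired = ceil(current_replicas * current_metric / target_metric),
    skipped when the ratio is within `tolerance` of 1.0, then clamped
    to [min_replicas, max_replicas].
    """
    if current_replicas <= 0 or target_metric <= 0:
        raise ValueError("current_replicas and target_metric must be positive")
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        # Close enough to the target: keep the current replica count.
        proposed = current_replicas
    else:
        proposed = math.ceil(current_replicas * ratio)
    # Never go below or above the configured bounds.
    return max(min_replicas, min(max_replicas, proposed))


if __name__ == "__main__":
    # 4 pods averaging 90% CPU against a 50% target: scale up.
    print(desired_replicas(4, 90, 50))  # 8
    # 8 pods averaging 20% CPU against a 50% target: scale down.
    print(desired_replicas(8, 20, 50))  # 4
```

The tolerance band keeps the replica count from flapping when the metric hovers near its target, and the upper bound caps cost during extreme spikes.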

Our task was to build a microservice architecture in which each microservice is responsible for a specific domain of the system. This would allow us to meet the rising demand for the platform and distribute the load.
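A minimal sketch of how splitting the system by domain also spreads load: each request is first routed to the microservice that owns its domain, then handed to one of that service's replicas. The paths, service names, and replica lists are hypothetical, and the round-robin picker is a generic stand-in for illustration. In a Kubernetes cluster, an Ingress typically handles path-based routing and a Service spreads requests across pods, so this logic would not normally live in application code.

```python
import itertools

# Hypothetical domains and replicas for a social platform; the case study
# does not name the real microservices.
ROUTES = {
    "/users": "user-service",
    "/posts": "post-service",
    "/feed": "feed-service",
}
REPLICAS = {
    "user-service": ["user-1", "user-2"],
    "post-service": ["post-1", "post-2"],
    "feed-service": ["feed-1", "feed-2", "feed-3"],
}
# One round-robin iterator per service spreads requests across its replicas.
_next_replica = {svc: itertools.cycle(pods) for svc, pods in REPLICAS.items()}


def route(path: str) -> str:
    """Return the service owning `path`, matching whole path segments (longest prefix wins)."""
    matches = [p for p in ROUTES if path == p or path.startswith(p + "/")]
    if not matches:
        raise LookupError(f"no service owns path {path!r}")
    return ROUTES[max(matches, key=len)]


def pick_backend(path: str) -> str:
    """Route by domain, then pick the next replica of that service in turn."""
    return next(_next_replica[route(path)])


if __name__ == "__main__":
    print(route("/posts/42/comments"))  # post-service
    print(route("/feed"))  # feed-service
```

Matching whole path segments keeps a path such as `/feedback` from being sent to the feed service by accident.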

VALUE DELIVERED
